AI agent budgets are under much more scrutiny in 2026. That is a healthy shift.

A year ago, many teams approved pilot spend because the upside felt obvious. In 2026, buyers hold proposals to a stricter standard. They want to know what the first version will cost, what the monthly run rate will look like, where hidden expenses appear, and how quickly the deployment can move from an interesting demo to measurable business value. The published evidence explains the pressure, because it points in both directions. Microsoft cites IDC research showing an average 3.7x return on AI investment overall, while IBM reports that only 25% of AI initiatives have delivered expected ROI and only 16% have scaled enterprise-wide. Deloitte adds another reality check: 15% of organizations already report significant, measurable ROI from generative AI, while 38% expect to achieve it within a year.

For US companies evaluating agents in 2026, the right question is rarely “How much does an AI agent cost?” The better question is “What architecture, governance model, and workflow scope produce the right cost-to-outcome ratio for our use case?” That framing matters because two agents can look similar in a product demo and still incur very different costs once you factor in integrations, security, monitoring, retrieval, human review, and model usage. OpenAI’s current tooling reflects that complexity directly: the Responses API now supports built-in tools such as web search, file search, computer use, code interpreter, and remote MCP servers, while the Agents SDK is designed for multi-agent handoffs, orchestration, and tracing. Each added layer can improve business value, but it also expands the budget envelope.

Why understanding AI agent cost matters in 2026

In 2026, agent pricing is less about a single line item and more about total cost of ownership. A business may begin with model inference as the focal point, then discover that the larger costs come from workflow integration, internal knowledge setup, access controls, testing, and ongoing support. That is especially true in the US enterprise market, where procurement teams increasingly seek auditable controls for privacy, data ownership, and security. OpenAI states that business customers own and control their data and that business data is not used to train models by default. Anthropic also offers US-only inference for workloads that need to run in the United States, with a 1.1x pricing uplift on input and output tokens.

That makes cost planning important for two reasons. First, it reduces surprise spend after launch. Second, it improves the quality of ROI modeling. Many failed AI projects do not fail because the model is weak. They fail because the implementation budget covered the assistant but not the operating model that supports it. NIST’s Generative AI Profile is useful here because it emphasizes risk management across design, development, use, and evaluation, which, in practice, means that compliance, validation, monitoring, and controls should be built into the plan from the start.

US AI agent development costs in 2026

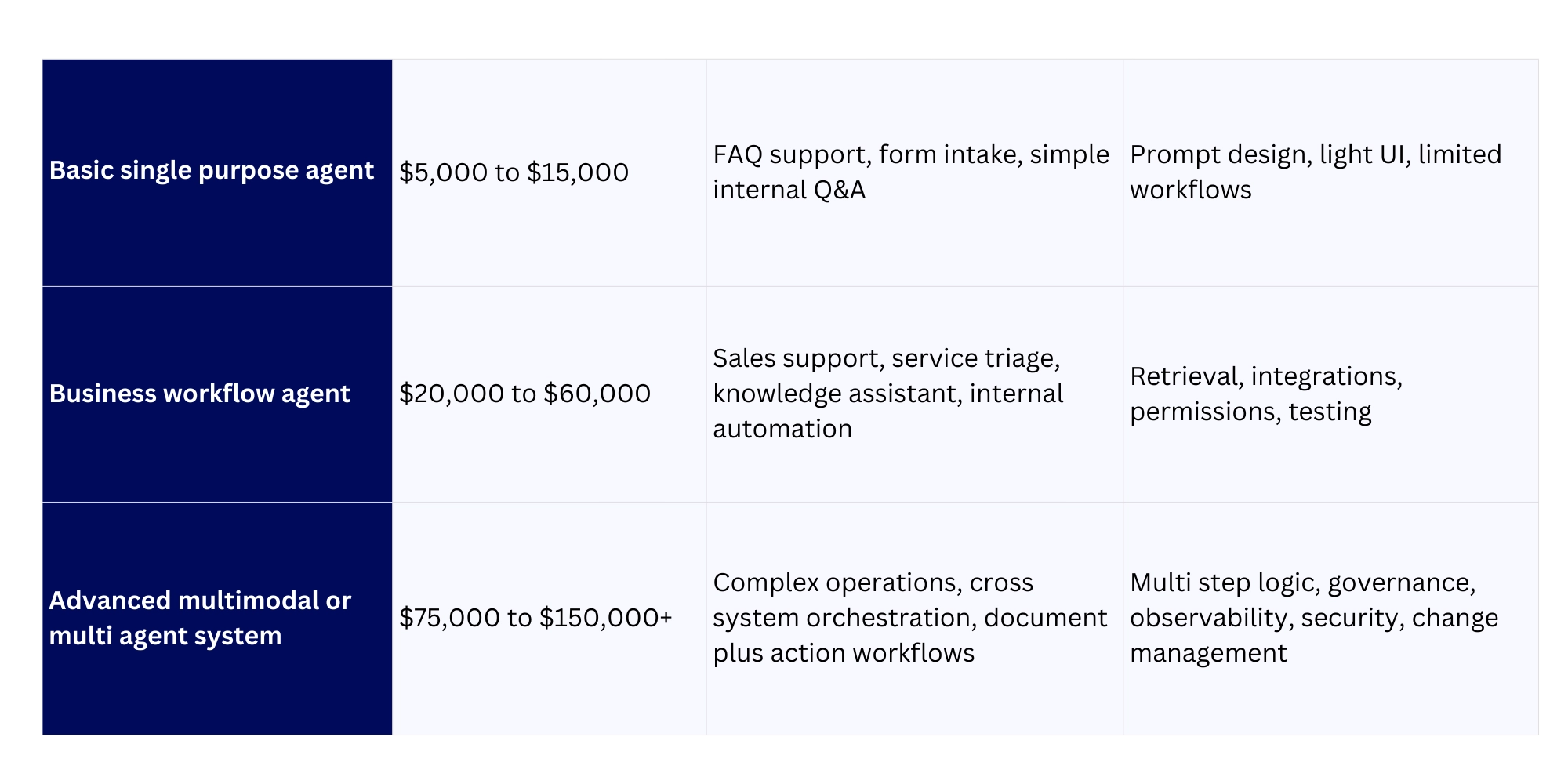

The most realistic planning range for US businesses in 2026 is broad. A simple, task-specific agent typically starts around $5,000 to $15,000. A more capable business workflow agent with integrations and retrieval often lands in the $20,000 to $60,000 range. A multi-system, enterprise-grade agent or multi-agent architecture can run $75,000 to $150,000 or more, depending on security, data sensitivity, orchestration depth, and rollout scope. These ranges align directionally with recent 2026 market guides, but treat them as planning benchmarks rather than universal market prices.

Here is a practical view of those ranges:

A customer support agent that answers common questions from a limited knowledge base sits toward the lower to middle end. Its cost rises once it needs CRM actions, escalation rules, sentiment handling, multilingual output, and analytics. An automation agent that retrieves internal files, validates data, writes notes into systems, and triggers workflows usually falls in the middle range. A multimodal enterprise agent that reads documents, reasons across systems, performs actions, and operates under strict approval rules moves into the upper range, because its delivery scope looks more like a software implementation than a chatbot setup.
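If you want to turn these planning tiers into a first-pass scoping aid, a minimal Python sketch might look like the following. The dollar ranges come from this article; the tier thresholds (number of connected systems, retrieval, multi-agent routing, approval rules) and the function names are hypothetical assumptions, so adjust them to your own scoping conventions.

```python
# Planning ranges from the article, in USD. The enterprise tier is open-ended ("$150,000+").
PLANNING_RANGES = {
    "simple": (5_000, 15_000),
    "workflow": (20_000, 60_000),
    "enterprise": (75_000, 150_000),
}


def planning_tier(systems_connected: int, uses_retrieval: bool,
                  multi_agent: bool, needs_approvals: bool) -> str:
    """Map a rough scope description to a planning tier.

    The thresholds below are hypothetical heuristics, not market rules.
    """
    if multi_agent or needs_approvals or systems_connected >= 3:
        return "enterprise"
    if uses_retrieval or systems_connected >= 1:
        return "workflow"
    return "simple"


def describe_range(tier: str) -> str:
    """Format the planning range for a tier, e.g. '$5,000 to $15,000'."""
    low, high = PLANNING_RANGES[tier]
    suffix = "+" if tier == "enterprise" else ""  # top tier has no fixed ceiling
    return f"${low:,} to ${high:,}{suffix}"


# Example: a support agent with retrieval and one CRM connection.
tier = planning_tier(systems_connected=1, uses_retrieval=True,
                     multi_agent=False, needs_approvals=False)
print(tier, describe_range(tier))  # prints: workflow $20,000 to $60,000
```

Keeping the thresholds explicit makes it easy to see which scope decision pushes a project into a higher tier.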

The biggest factors driving AI agent development cost

1. Agent complexity

A single agent that answers bounded questions is cheaper than a system that routes work across specialized agents. OpenAI’s Agents SDK explicitly supports handoffs between specialized agents and full tracing of what happened, which is powerful for production use but adds orchestration work and test surface area. More steps, more decision logic, and more fallback states typically mean more engineering hours.
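One way to see why handoffs expand the test surface is to count the distinct routes a request can take. The sketch below is plain Python, not the OpenAI Agents SDK; the agent names and handoff graph are hypothetical, and it assumes each agent can either finish the task or hand off to one of its listed targets, with no cycles in the graph.

```python
# Hypothetical handoff graphs: each agent lists the agents it may hand off to.
SINGLE_AGENT = {"support": []}

MULTI_AGENT = {
    "triage": ["billing", "tech_support", "human_escalation"],
    "billing": ["refunds", "human_escalation"],
    "tech_support": ["human_escalation"],
    "refunds": ["human_escalation"],
    "human_escalation": [],
}


def count_routes(graph: dict[str, list[str]], start: str) -> int:
    """Count distinct routes through an acyclic handoff graph.

    At each agent, a route either stops (the agent finishes the task)
    or continues through one of that agent's handoff targets.
    """
    memo: dict[str, int] = {}

    def routes_from(agent: str) -> int:
        if agent not in memo:
            # One route that ends here, plus every route via each handoff target.
            memo[agent] = 1 + sum(routes_from(nxt) for nxt in graph.get(agent, []))
        return memo[agent]

    return routes_from(start)


print(count_routes(SINGLE_AGENT, "support"))  # 1
print(count_routes(MULTI_AGENT, "triage"))    # 8
```

Each route is a path that ideally gets at least one test case and a readable trace, which is where much of the extra engineering time goes.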

2. Model selection

Model choice changes both build economics and operating economics. Anthropic’s Claude Haiku 4.5 is priced at $1 per million input tokens and $5 per million output tokens, with prompt caching options and a 1.1x uplift for US-only inference. OpenAI’s current pricing page shows examples such as GPT Realtime 1.5 at $4 per million input tokens and $16 per million output tokens, with higher pricing for audio workloads. Those differences may look small in prototype mode, but they matter at scale. A high-volume support agent or research agent can generate meaningful monthly variance just from model routing decisions.
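To see how model choice shows up in the monthly bill, here is a minimal estimator using the list prices quoted above. The request volume and token counts in the example are hypothetical, the model labels are informal names rather than API model IDs, and the sketch ignores prompt caching and audio pricing, so treat the output as a rough planning number.

```python
# USD per million tokens (input, output), as quoted in this article.
PRICES_PER_MILLION = {
    "claude-haiku-4.5": (1.00, 5.00),
    "gpt-realtime-1.5-text": (4.00, 16.00),  # text tokens only; audio is priced higher
}


def monthly_model_cost(model: str, requests_per_month: int,
                       input_tokens_per_request: int, output_tokens_per_request: int,
                       uplift: float = 1.0) -> float:
    """Estimate monthly inference spend in USD.

    `uplift` multiplies both input and output token prices,
    e.g. 1.1 for Anthropic's US-only inference option.
    """
    input_price, output_price = PRICES_PER_MILLION[model]
    input_millions = requests_per_month * input_tokens_per_request / 1_000_000
    output_millions = requests_per_month * output_tokens_per_request / 1_000_000
    cost = (input_millions * input_price + output_millions * output_price) * uplift
    return round(cost, 2)


# Hypothetical workload: 100,000 requests a month, 2,000 input and 500 output tokens each.
volume = dict(requests_per_month=100_000, input_tokens_per_request=2_000,
              output_tokens_per_request=500)
print(monthly_model_cost("claude-haiku-4.5", **volume))              # 450.0
print(monthly_model_cost("claude-haiku-4.5", **volume, uplift=1.1))  # 495.0
print(monthly_model_cost("gpt-realtime-1.5-text", **volume))         # 1600.0
```

In this hypothetical, the monthly gap between the two price points is more than $1,000 for the same workload, which is the kind of variance model routing decisions control.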

3. Integrations and action depth

Agents become more valuable when they work within business systems, but that is also where costs rise fastest. A read-only knowledge assistant is easier to ship than an agent that touches CRM, ERP, ticketing, email, internal docs, and approval systems. OpenAI’s Responses API supports built-in tools and remote MCP (Model Context Protocol) servers, which expand what agents can do, but every added tool adds integration work, permissions handling, and testing.

4. Retrieval and knowledge infrastructure

Many production agents need retrieval-augmented generation (RAG) rather than raw prompting. That introduces vector search, storage, indexing, refresh pipelines, and permissions-aware retrieval. Pinecone’s current pricing shows a $50 per month minimum for Standard and a $500 per month minimum for Enterprise, with Enterprise features such as audit logs, private networking, service accounts, and customer-managed encryption keys. Those are meaningful costs for serious deployments, especially as data volumes and read activity grow.
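A monthly minimum changes the math for small deployments. The sketch below assumes the minimum acts as a floor: if metered usage comes in below the plan minimum, you pay the minimum; otherwise you pay usage. Metered usage is taken as an input because Pinecone’s metering units are not modeled here, and the floor behavior is an assumption to confirm against Pinecone’s current pricing terms.

```python
# Monthly minimums quoted in this article, in USD.
PLAN_MINIMUMS = {"standard": 50.0, "enterprise": 500.0}


def monthly_vector_db_bill(plan: str, metered_usage: float) -> float:
    """Return the expected monthly charge, treating the plan minimum as a floor."""
    return max(metered_usage, PLAN_MINIMUMS[plan])


# Hypothetical usage levels for a small pilot and a growing deployment.
print(monthly_vector_db_bill("standard", 12.50))     # 50.0: pilot pays the minimum
print(monthly_vector_db_bill("standard", 180.00))    # 180.0: usage exceeds the minimum
print(monthly_vector_db_bill("enterprise", 120.00))  # 500.0: enterprise features raise the floor
```

For a pilot, the minimum often exceeds actual usage, so the plan you need for audit logs or private networking can matter more than query volume.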

5. Security, privacy, and compliance

US companies increasingly evaluate AI purchases through a governance lens. OpenAI’s enterprise privacy commitments and Anthropic’s US-only inference option are useful examples of the controls buyers now expect. NIST’s Generative AI Profile reinforces that governance must cover the full lifecycle, meaning policy, logging, monitoring, testing, and review are part of the build budget rather than optional extras.

Cost breakdown from build to deployment and beyond

Most AI agent budgets are easier to manage when broken into phases.

Discovery and design

This includes workflow mapping, business case definition, success metrics, governance requirements, data source selection, and architecture choice. For many companies, this is where cost optimization begins because it prevents overbuilding.

Development

This covers prompt and workflow design, orchestration logic, integrations, retrieval setup, UI or chat surface, testing, and admin controls. In a simple build, this is the largest visible line item. In a complex build, it may be only one part of the total.

Deployment and validation

This includes user acceptance testing, guardrail tuning, policy review, analytics setup, rollout planning, and internal documentation. Teams often underestimate this phase, especially when the agent is client-facing or action-taking.

Ongoing operations

This includes model usage, infrastructure, vector database fees, observability, bug fixing, prompt updates, retraining or reindexing, security reviews, and support. This is where many early budgets prove incomplete. IBM’s research on ROI is relevant here because it highlights that many organizations still struggle to convert AI investments into scalable value. Operational discipline, or the lack of it, is a major reason why.

A helpful way to think about monthly run costs is in three buckets: model usage, workflow infrastructure, and support. Even where inference is cheap, support and governance can still dominate the monthly operating picture.
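The three-bucket view is easy to keep as a small data structure. In this minimal sketch the bucket names follow the article, while the dollar figures are hypothetical placeholders chosen to show how support can outweigh inference even when inference is cheap.

```python
from dataclasses import dataclass


@dataclass
class MonthlyRunCost:
    """Monthly operating cost in USD, split into the article's three buckets."""
    model_usage: float              # inference spend
    workflow_infrastructure: float  # vector database, hosting, observability
    support: float                  # bug fixes, prompt updates, reindexing, security reviews

    def total(self) -> float:
        return self.model_usage + self.workflow_infrastructure + self.support

    def shares(self) -> dict[str, float]:
        """Fraction of the monthly total that each bucket represents."""
        total = self.total()
        buckets = {
            "model_usage": self.model_usage,
            "workflow_infrastructure": self.workflow_infrastructure,
            "support": self.support,
        }
        return {name: round(value / total, 3) for name, value in buckets.items()}


# Hypothetical month: cheap inference, modest infrastructure, part-time support.
month = MonthlyRunCost(model_usage=450.0, workflow_infrastructure=300.0, support=4_000.0)
print(month.total())   # 4750.0
print(month.shares())  # {'model_usage': 0.095, 'workflow_infrastructure': 0.063, 'support': 0.842}
```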

Pricing models compared

The 2026 US market has three main pricing patterns.

Custom development

This is still the best fit for companies with differentiated workflows, proprietary data, or strict governance requirements. The upside is flexibility. The tradeoff is higher upfront spend and a more involved implementation cycle.

Subscription and platform pricing

This is attractive when speed matters and the use case aligns with the platform’s operating model. Microsoft Copilot Studio, for example, is sold as a tenant-wide license that includes capacity packs of 25,000 Copilot Credits priced at $200 per pack per month. Salesforce Agentforce offers flexible pricing options, including consumption-based, conversation-based, and per-user pricing. These models reduce initial build friction, but they can become expensive when action volume, complexity, or seat count increases.
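Pack-based pricing is a step function, so it helps to estimate how many packs a projected workload needs. The sketch below uses the pack size and price quoted above and assumes packs are bought in whole units. How many Copilot Credits a given agent interaction consumes varies by feature, so the monthly credit figures are hypothetical inputs you would need to estimate from Microsoft’s own guidance.

```python
import math

CREDITS_PER_PACK = 25_000  # Copilot Credits per capacity pack, as quoted in this article
PRICE_PER_PACK = 200.0     # USD per pack per month


def copilot_studio_packs(monthly_credits: int) -> tuple[int, float]:
    """Return (packs needed, monthly USD cost), rounding up to whole packs."""
    packs = math.ceil(monthly_credits / CREDITS_PER_PACK)
    return packs, packs * PRICE_PER_PACK


# Hypothetical growth path: credit consumption doubling as adoption spreads.
for credits in (20_000, 40_000, 80_000):
    packs, cost = copilot_studio_packs(credits)
    print(f"{credits:>6,} credits -> {packs} pack(s), ${cost:,.0f}/month")
```

Because costs move in $200 increments, usage that edges just past a pack boundary adds a full pack to the bill.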

No-code and low-code approaches

These can be a good fit for lightweight internal automation or early pilot stages. They usually reduce launch time, though they may limit architectural control, portability, and deeper customization later. For some firms, the right answer is hybrid: use a platform for the first layer and custom development for higher-value or more sensitive workflows.

A practical ROI model for AI agents

The best ROI cases in 2026 come from narrow use cases with clear unit economics. Microsoft cites IDC research showing an average 3.7x return on AI investments, while top leaders in that dataset reported an average ROI of $10.30 for every dollar invested. Those numbers are compelling, but they should be read alongside more conservative evidence from IBM and Deloitte showing that broad-market ROI remains uneven and often depends on execution quality.

A practical ROI formula looks like this:

Annual net value = (hours saved × loaded hourly cost) + revenue uplift + error-reduction savings + cycle-time gains – annual operating cost

For example, imagine an internal operations agent that saves 1,000 hours per quarter (about 4,000 hours a year) across service, project coordination, and reporting work. Add fewer manual errors, faster case resolution, and better SLA performance, and the annual value can quickly exceed the build cost. But that result only holds if the workflow is adopted consistently and measured properly. AI projects with vague ownership or weak metrics rarely produce board-ready ROI.
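Here is the ROI formula as a small function, applied to the operations-agent example. To express labor savings in dollars, the sketch multiplies hours by an assumed loaded hourly cost, and it adds a hypothetical `adoption_rate` parameter to reflect the point that value only materializes when the workflow is actually used. The $60 hourly cost, $50,000 operating cost, $60,000 build cost, and adoption rates are all hypothetical.

```python
def annual_net_value(hours_saved_per_year: float, loaded_hourly_cost: float,
                     annual_operating_cost: float, revenue_uplift: float = 0.0,
                     error_reduction: float = 0.0, cycle_time_gains: float = 0.0,
                     adoption_rate: float = 1.0) -> float:
    """Annual value created minus annual operating cost, in USD.

    `adoption_rate` (0 to 1) is a hypothetical extension that scales
    labor savings to reflect partial usage of the workflow.
    """
    labor_value = hours_saved_per_year * adoption_rate * loaded_hourly_cost
    return labor_value + revenue_uplift + error_reduction + cycle_time_gains - annual_operating_cost


def payback_months(build_cost: float, net_value_per_year: float) -> float:
    """Months of net value needed to recover the initial build cost."""
    return build_cost / (net_value_per_year / 12)


# 1,000 hours per quarter is about 4,000 hours a year; other gains left at zero.
full = annual_net_value(4_000, loaded_hourly_cost=60, annual_operating_cost=50_000)
half = annual_net_value(4_000, loaded_hourly_cost=60, annual_operating_cost=50_000,
                        adoption_rate=0.5)
print(full, round(payback_months(60_000, full), 1))  # 190000.0 3.8
print(half, round(payback_months(60_000, half), 1))  # 70000.0 10.3
```

Halving adoption in this hypothetical nearly triples the payback period, which is why ownership and measurement matter as much as the build itself.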

How CT Labs helps companies control costs and improve outcomes

The strongest AI agent programs are designed around business outcomes first, then matched to the right delivery model. That is where CT Labs can create real value.

Instead of pushing every client toward a custom build, CT Labs can help define which workflows deserve custom engineering, which can run on platform economics, and where a lower-cost pilot makes sense before broader rollout. That matters in 2026 because many businesses are facing an overcrowded market of models, tools, and agent platforms, each with distinct pricing mechanics and trade-offs in data handling, orchestration, and governance. Official pricing from OpenAI, Anthropic, Microsoft, Salesforce, and Pinecone already shows how quickly cost structures diverge once real usage begins.

CT Labs can also bring discipline to ROI modeling. That includes workload selection, architecture right-sizing, vendor mix, governance design, and cost tracking from pilot through production. For US buyers, that combination of build discipline and compliance awareness is often what determines whether an agent becomes a scalable asset or a costly experiment.

FAQ

How much does it cost to build a simple AI agent in 2026?

For a focused internal or customer-facing assistant with limited integrations, a realistic planning range is around $5,000 to $15,000, though the number climbs quickly when retrieval, security, or action-taking is added.

What creates the highest hidden costs?

Integrations, governance, retrieval infrastructure, ongoing support, and adoption work are often bigger cost drivers than the base model itself. Pinecone minimums, platform credit systems, and action-based pricing all reinforce that point.

Are AI agent subscriptions cheaper than custom development?

They can be cheaper at the start, especially for standard use cases. Over time, high usage, deeper integrations, and seat expansion can narrow that gap. That is why the total cost of ownership matters more than the entry price.

How should US businesses think about compliance?

They should start with data ownership, model training defaults, access controls, monitoring, and lifecycle risk management. NIST’s framework is a strong baseline, and enterprise vendor privacy terms should be reviewed during procurement.

What is the best next step before asking for a quote?

Define one workflow, one outcome metric, one set of connected systems, and one target user group. That usually produces a much more accurate estimate than asking for “an AI agent” in the abstract.